Your E2E tests are unreliable? Here's why

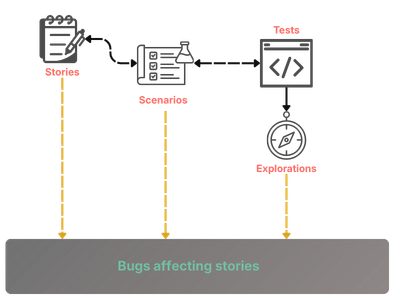

End-to-end tests are a necessary evil. They are the last check that the product actually works in a real browser, but they break often enough that the suite becomes a burden instead of a trustworthy signal.

There are three main sources of variance that make E2E tests unreliable. Understanding them is the first step toward a suite you can actually rely on.

1. World-state variance

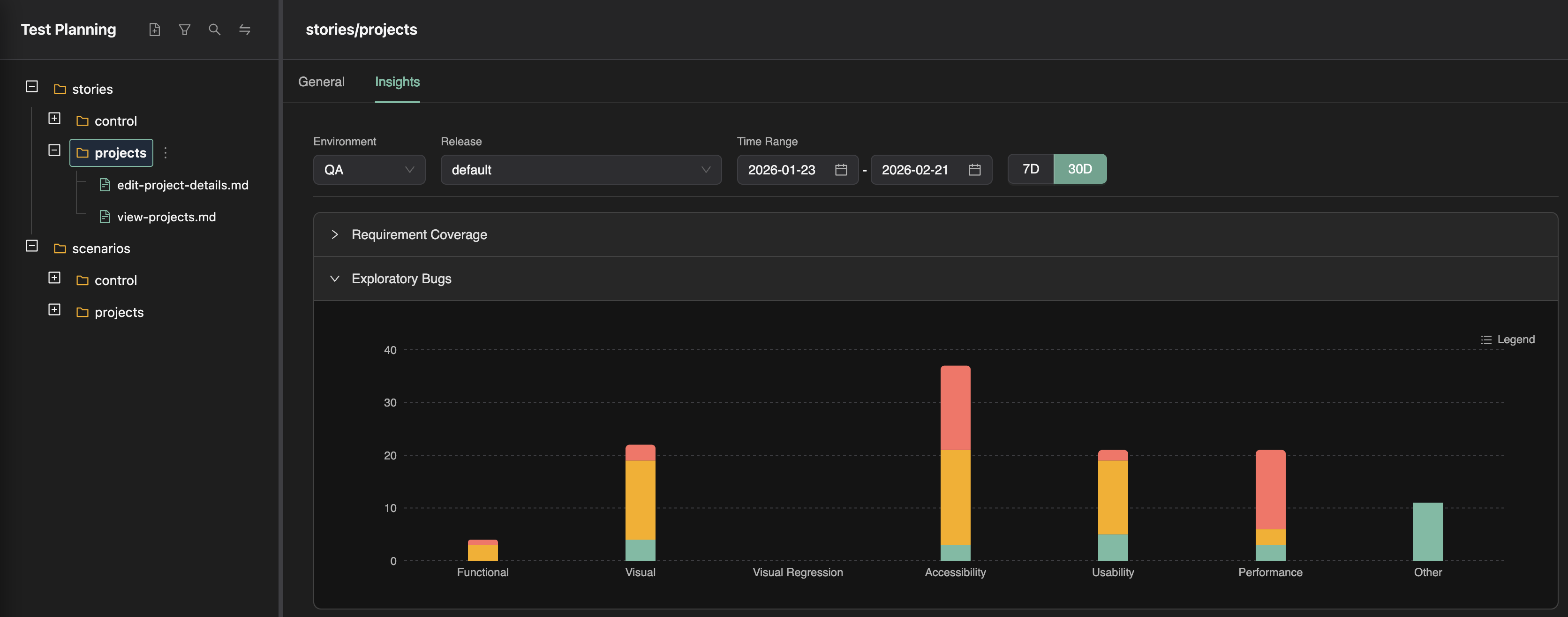

This is what happens when your tests run in a different world-state than the one they were written against. A common cause is a shared environment where manual testing and automated runs both happen. The world changes between runs; the next run fails for reasons that have nothing to do with the code under test.

This kind of variance does more than flake tests. It also slows feedback: if a shared environment is only updated after PRs merge to main, a failure surfaces after several changes have already landed, so root-cause isolation is weaker and triage is more expensive.

2. System variance

This is variance built into the stack itself: network latency, transient failures, UI paint timing, and so on. Mature frameworks like Playwright address much of it with auto-waiting before actions, auto-retrying assertions, and expect polling, so a big slice of “flakiness” is really a matter of tooling and patterns, not fate.

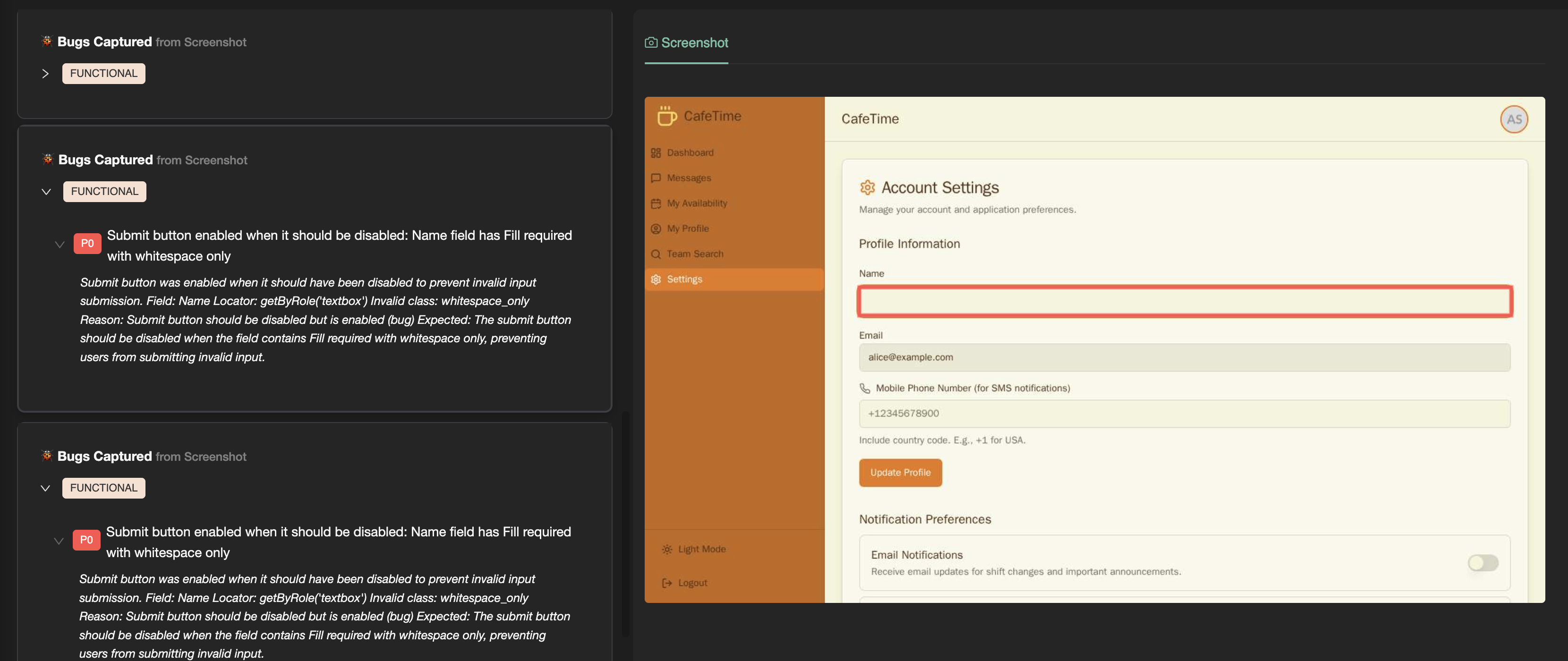

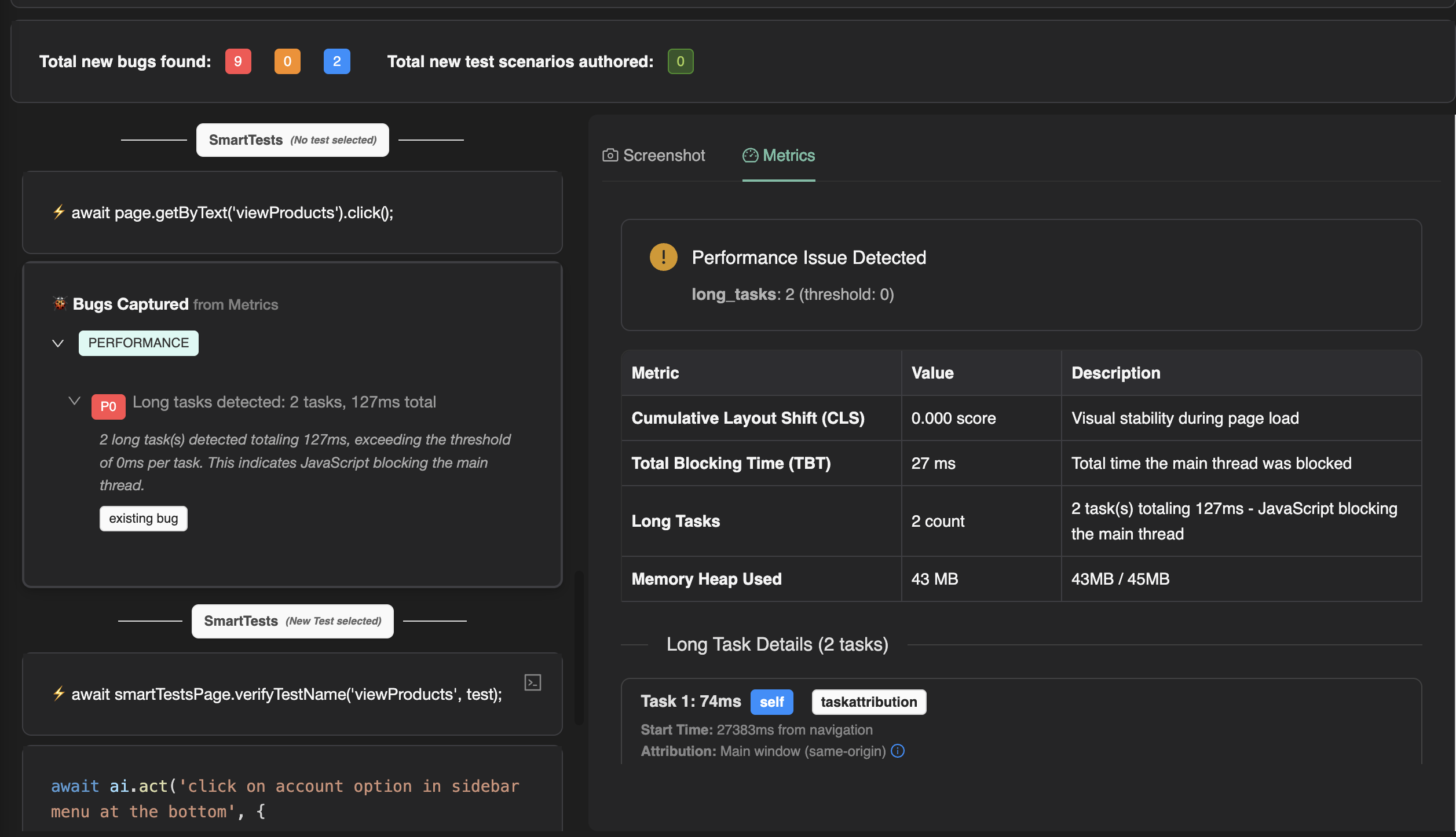

3. Product variance

Even in a steady state, with no product change, modern web apps are not as simple as calculators. Behavior can be inherently non-deterministic, and that is only more true now that AI often sits in the user journey (for example, a splash screen or offer that appears only sometimes). Much of that variance may be irrelevant to what a given test is trying to prove.

When tests are authored with a fragile, UI-selector-heavy approach, those product-level variances show up as broken steps. The test is coupled to incidental UI, not to intent.

Solve for these three kinds of variance, and you get a suite that is finally trustworthy.

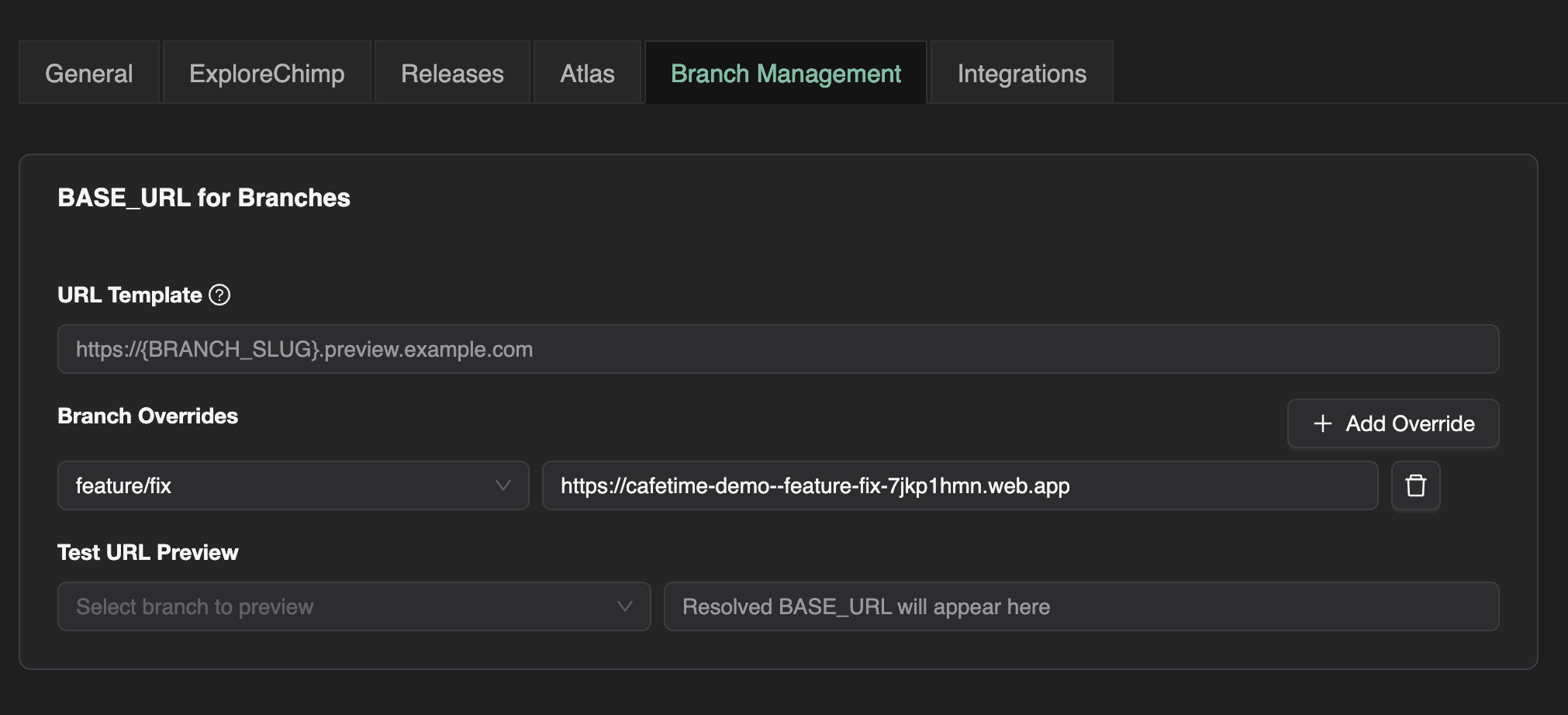

World-state variance → controlled environments

Use ephemeral environments loaded into known, predefined world-states, so each run matches the state the test was authored against as closely as possible.
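As a minimal sketch of the idea, assuming the world-state can be expressed as plain fixture data, each run can start from a fresh copy of a predefined baseline so that changes made by one run never leak into the next. The `WorldState` shape and the `freshWorldState` helper are hypothetical, and the in-memory object only stands in for whatever a real ephemeral environment would provision (database rows, feature flags, accounts).

```typescript
// Hypothetical shape of the state a test was authored against.
interface WorldState {
  users: { email: string; plan: "free" | "pro" }[];
  featureFlags: Record<string, boolean>;
}

// The predefined baseline; treated as read-only.
const BASELINE: WorldState = {
  users: [{ email: "alice@example.com", plan: "pro" }],
  featureFlags: { newCheckout: false },
};

// Returns an independent deep copy so every run starts from the same state.
function freshWorldState(): WorldState {
  return JSON.parse(JSON.stringify(BASELINE)) as WorldState;
}

// Run A mutates its own world (for example, a manual tester adds a user).
const runA = freshWorldState();
runA.users.push({ email: "manual-tester@example.com", plan: "free" });
runA.featureFlags["newCheckout"] = true;

// Run B still sees the baseline, not the leftovers from run A.
const runB = freshWorldState();
console.log(runB.users.length, runB.featureFlags["newCheckout"]); // prints "1 false"
```

In a shared environment, run B would instead inherit whatever run A left behind, which is exactly the drift described above.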

System variance → solid automation primitives

This is largely where mature frameworks shine. With Playwright, actions wait for elements to be actionable and assertions retry until they pass or time out, so you are not reinventing waits in every test.
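The core idea behind waiting and retrying can be sketched as a bounded retry loop: re-check a condition until it holds or a budget runs out, instead of checking once at an arbitrary moment. The helper below is a hypothetical, synchronous illustration of that idea and is not Playwright's implementation; a real framework waits between attempts and bounds the loop by time rather than by attempt count.

```typescript
// Hypothetical helper illustrating retry-until-true; not a Playwright API.
function retryUntil(check: () => boolean, maxAttempts: number): number {
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    if (check()) return attempt; // condition held: report how many tries it took
  }
  throw new Error(`condition not met after ${maxAttempts} attempts`);
}

// Simulated UI: the button only reports "enabled" on the third look.
let looks = 0;
const buttonEnabled = (): boolean => ++looks >= 3;

// A single check at a fixed moment would have failed; retrying passes.
const attemptsNeeded = retryUntil(buttonEnabled, 5);
console.log(attemptsNeeded); // prints 3
```

The point of the sketch is the shape of the loop: the test states the condition it cares about, and the retry budget absorbs the timing noise.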

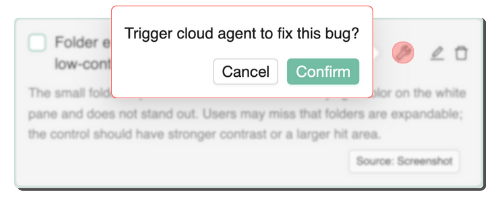

Product variance → intent where the UI is messy

This is where agentic steps in tests can help: natural-language instructions executed by an AI instead of brittle coupling to selectors, applied only to the messy, flaky parts of the flow.

There is no free lunch: natural-language steps tend to be slower, costlier, and harder to debug than plain script. The goal is to use them surgically, not everywhere.
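One way to picture "surgical" use, as a sketch only: model a test as a list of steps where most are ordinary scripted actions and a few are natural-language intents handed to an injected executor. The `Step` type, `runPlan`, and the `IntentExecutor` signature are hypothetical names chosen for illustration, not any tool's actual API, and the executor here is a stub that just records the instruction.

```typescript
// A step is either a plain scripted action or a natural-language intent.
type Step =
  | { kind: "script"; run: () => void }
  | { kind: "intent"; instruction: string };

// Hypothetical executor for intent steps (an AI agent in a real setup).
type IntentExecutor = (instruction: string) => void;

// Runs steps in order and returns how many of them were intent steps.
function runPlan(steps: Step[], execIntent: IntentExecutor): number {
  let intentCount = 0;
  for (const step of steps) {
    if (step.kind === "script") {
      step.run();
    } else {
      execIntent(step.instruction);
      intentCount++;
    }
  }
  return intentCount;
}

const log: string[] = [];
const plan: Step[] = [
  { kind: "script", run: () => { log.push("script: open dashboard"); } },
  // Only the unpredictable part of the flow is expressed as an intent.
  { kind: "intent", instruction: "Dismiss the promotional offer if one is shown" },
  { kind: "script", run: () => { log.push("script: submit form"); } },
];

// Stub executor: a real one would drive the browser from the instruction.
const intentCount = runPlan(plan, (instruction) => { log.push(`intent: ${instruction}`); });
console.log(`${intentCount} of ${plan.length} steps used the intent executor`);
// prints "1 of 3 steps used the intent executor"
```

Keeping the intent steps countable makes the cost visible: if that number creeps up, the suite is drifting toward the slower, harder-to-debug end of the tradeoff.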

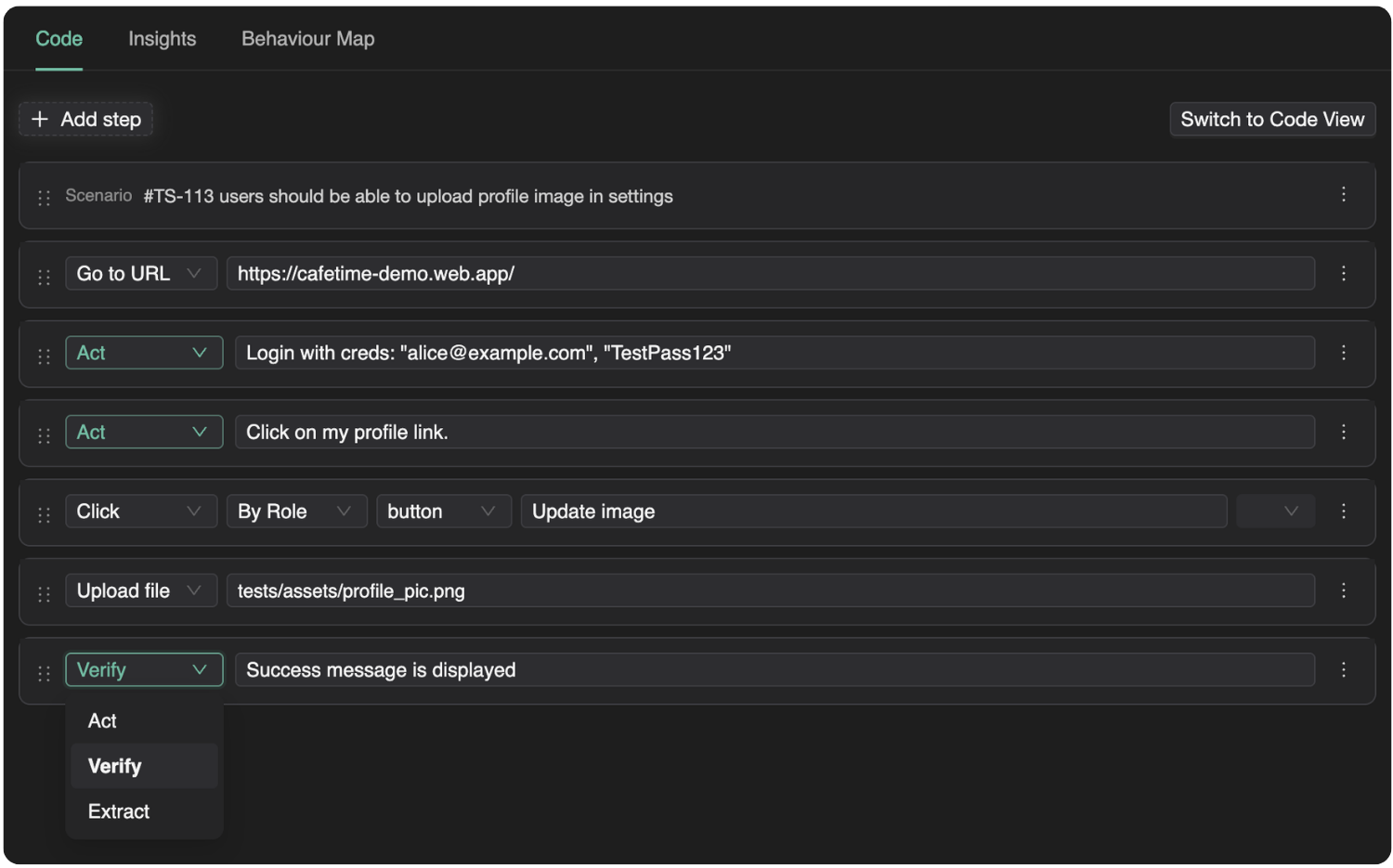

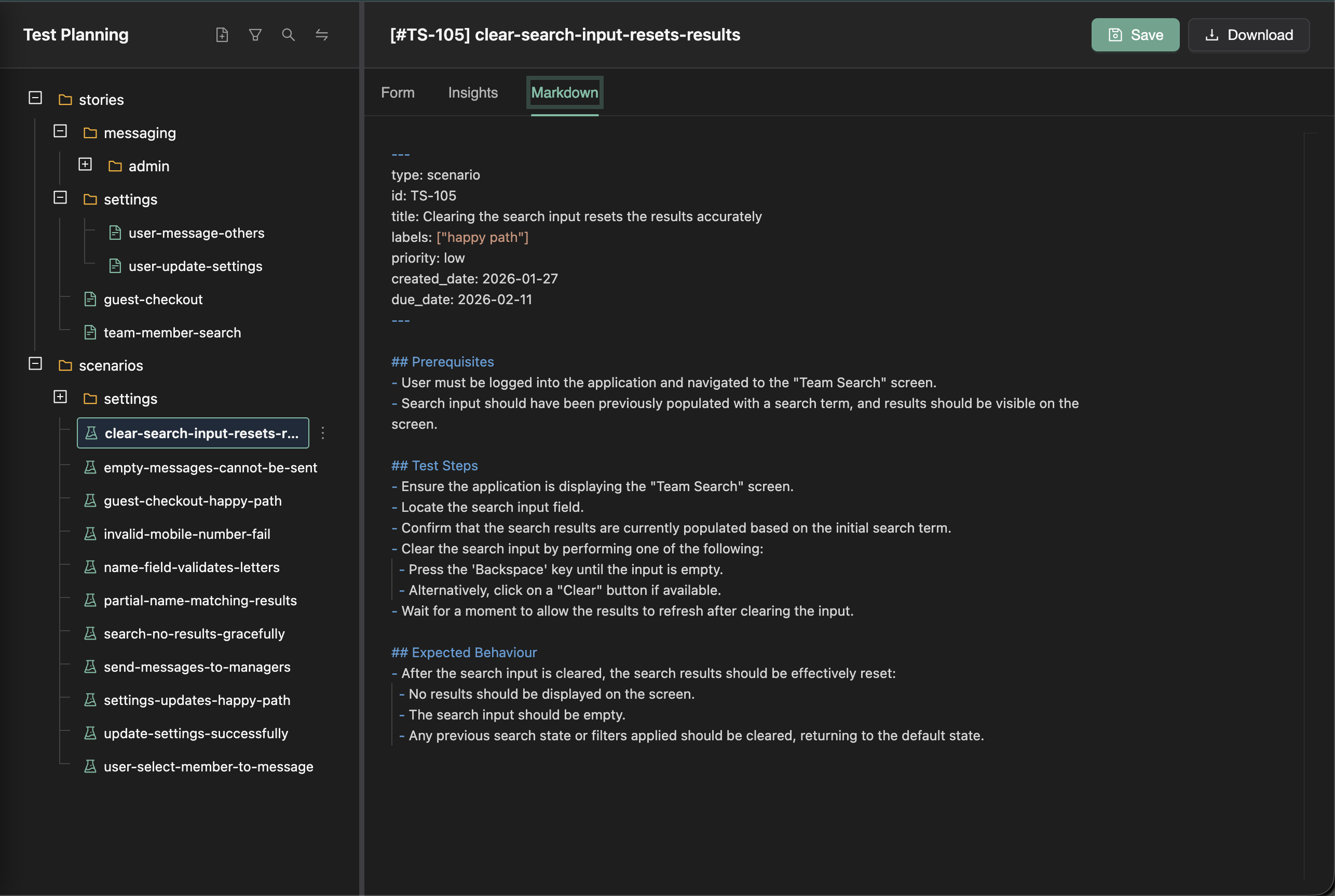

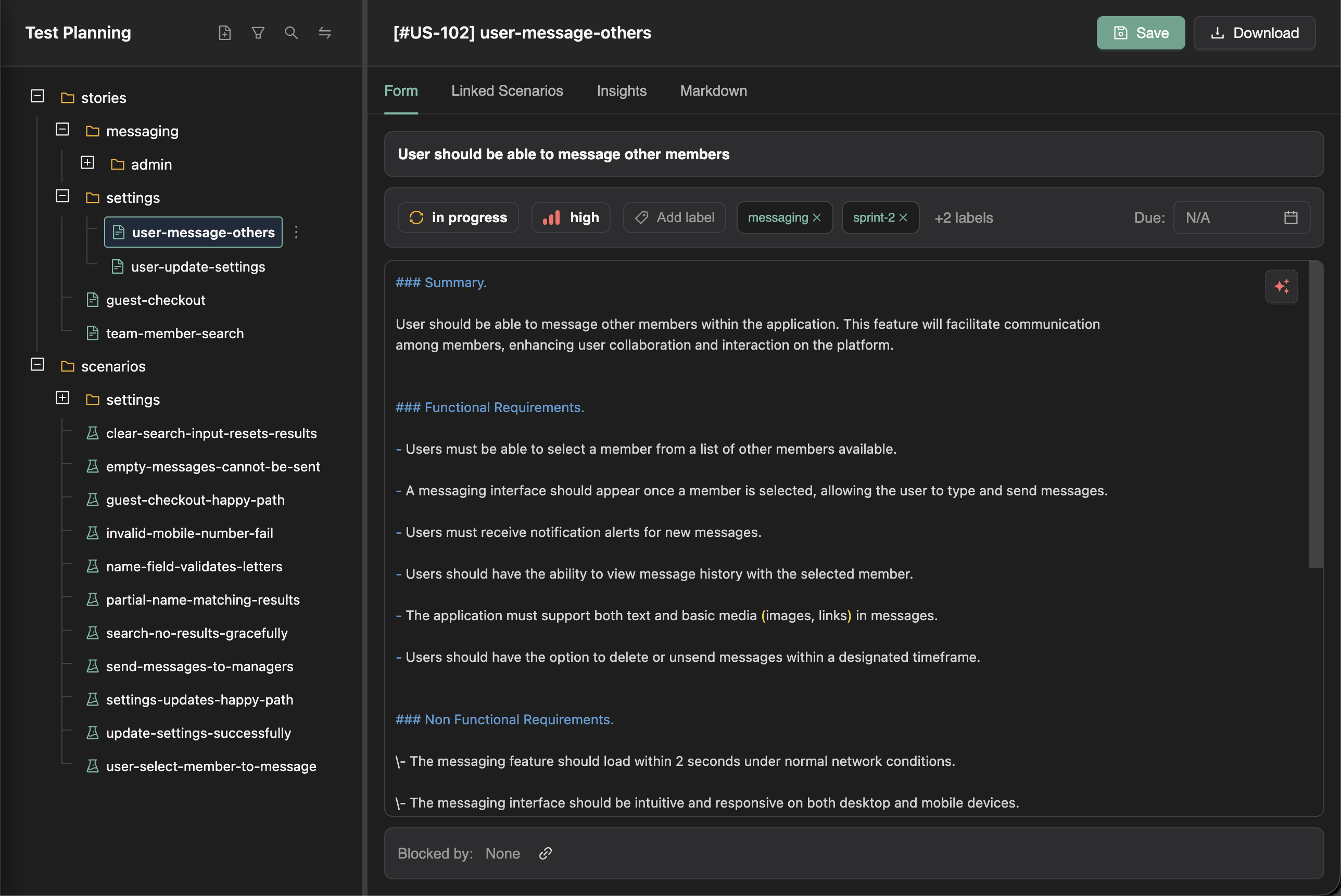

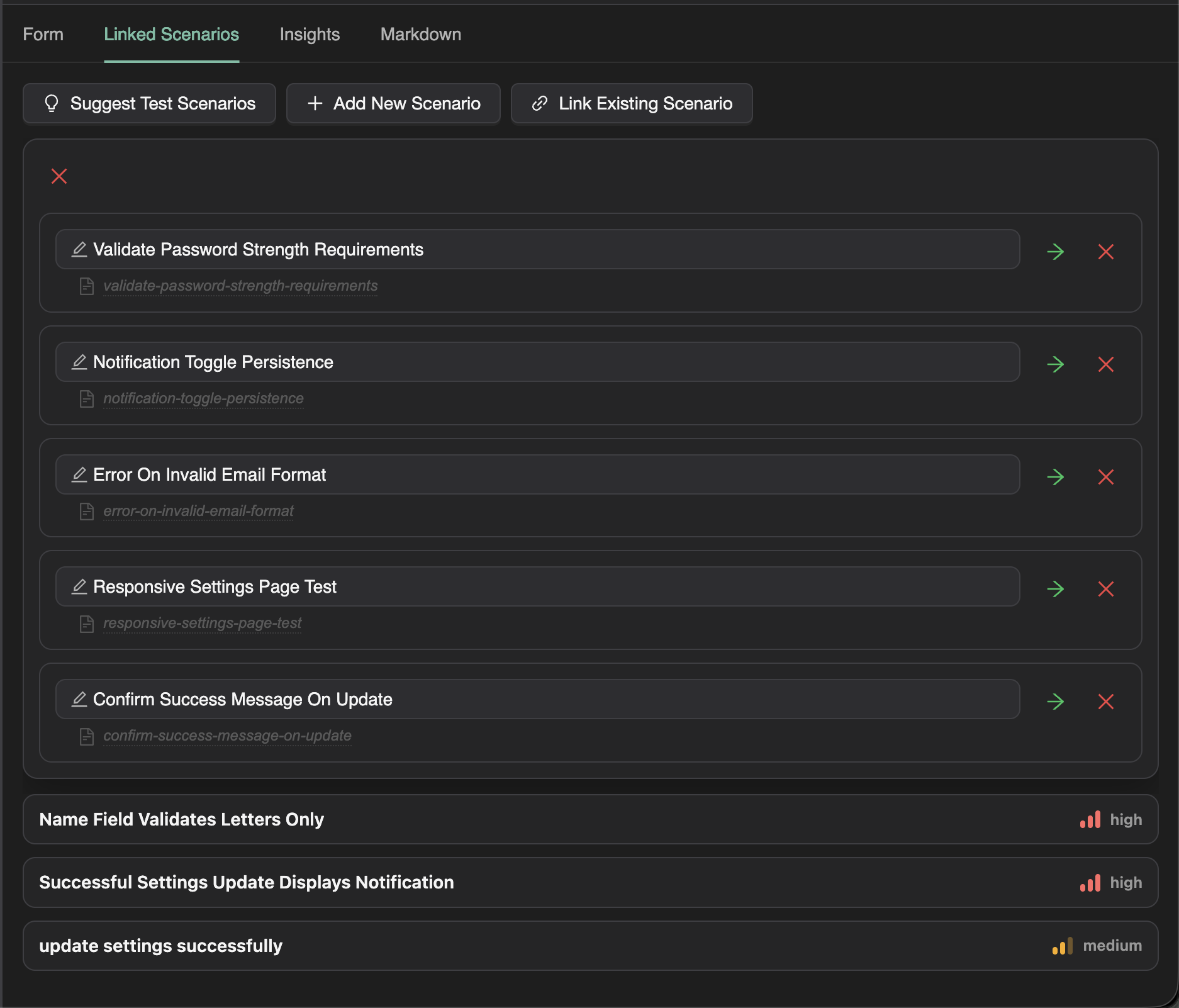

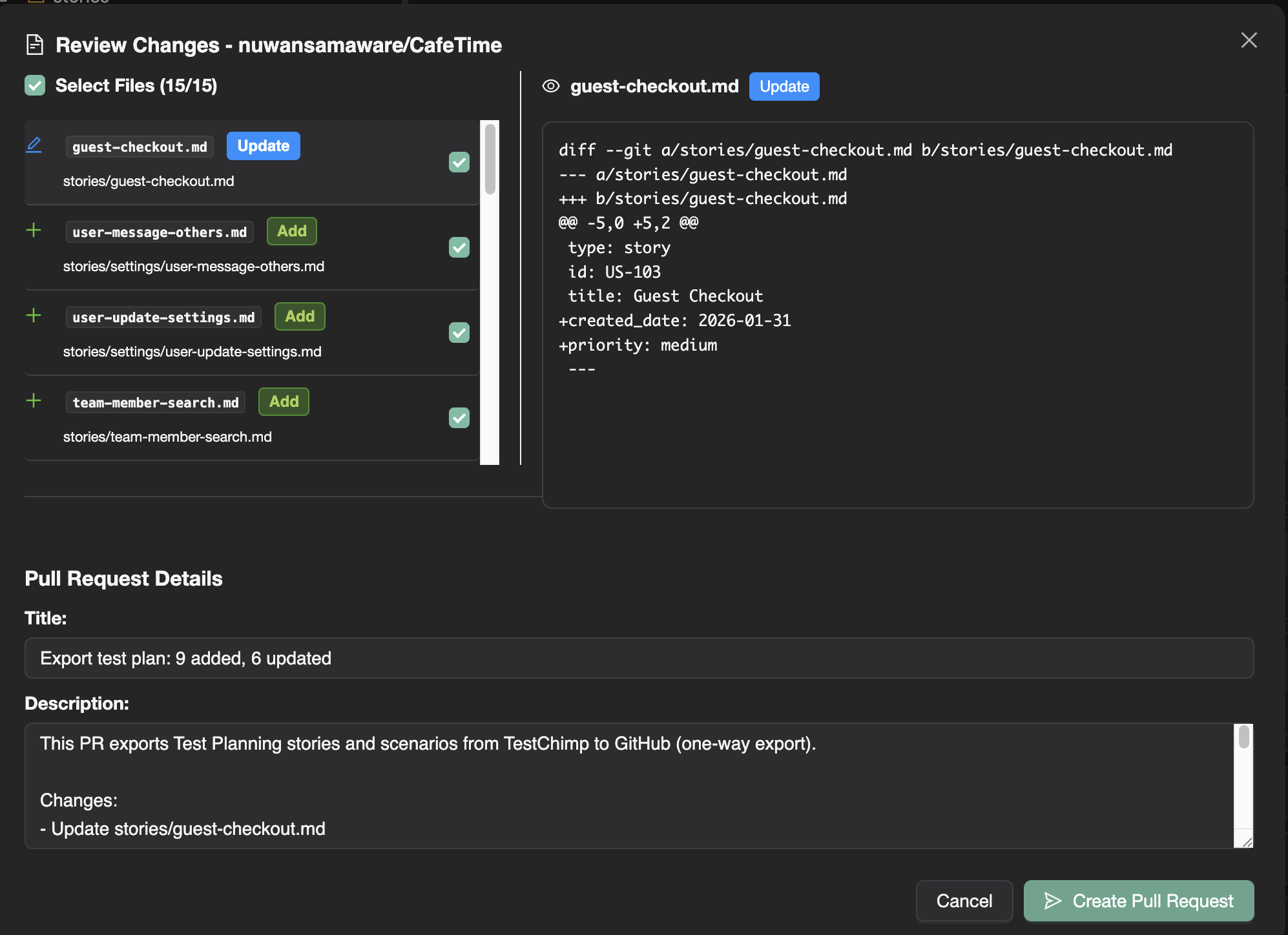

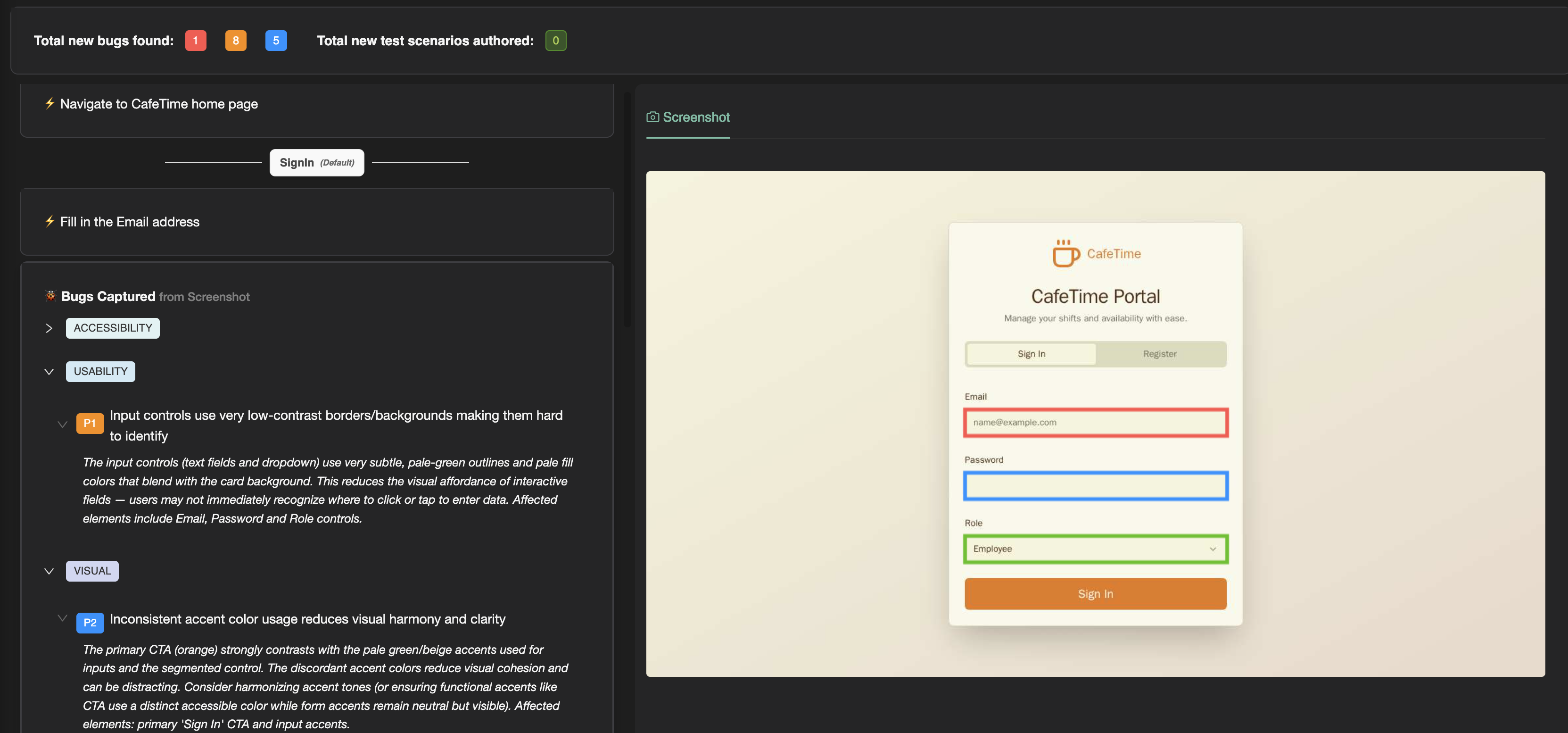

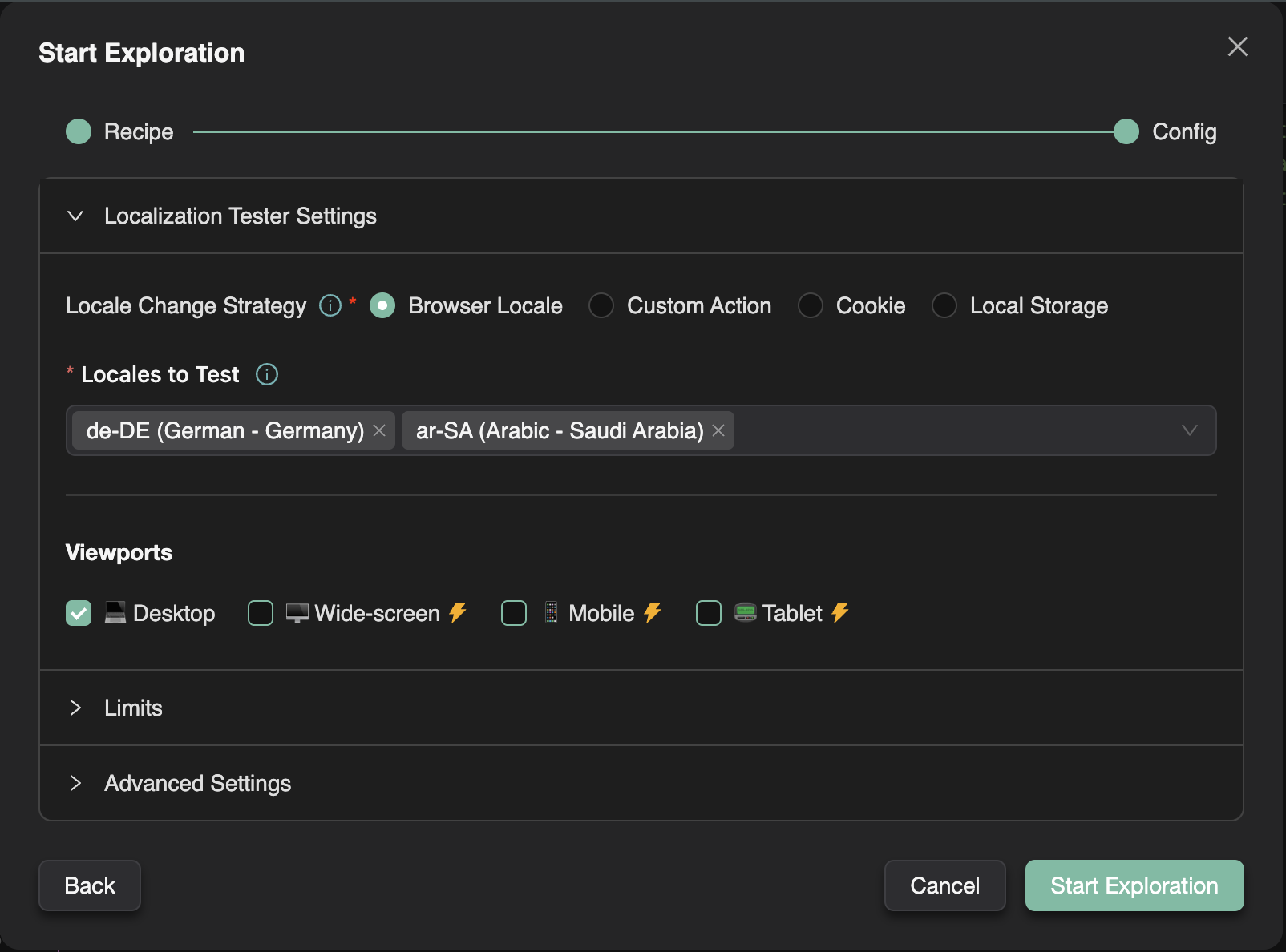

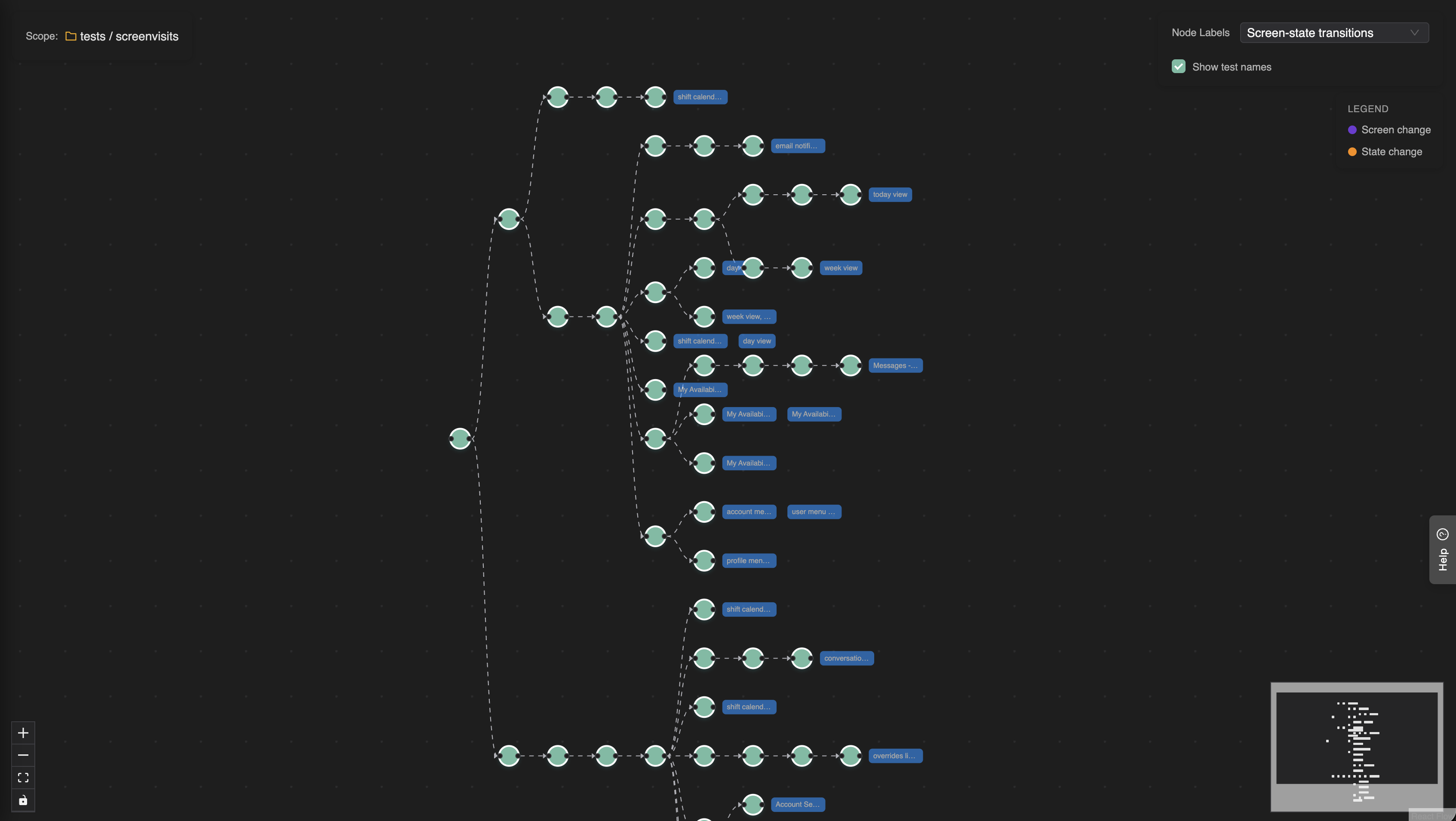

SmartTests: intent-based Playwright scripts

That tradeoff is what TestChimp SmartTests are designed around: intent-based Playwright scripts.

They are still scripts for the most part, with an extra capability for the places where you need flexibility: the parts of the app that fight selector-based automation.

Instead of:

```typescript
await page.locator('.anticon.anticon-plus-square.ant-tree-switcher-line-icon > svg').nth(1).click();
```

Now you can write:

```typescript
await ai.act('Expand the tree displayed in the left pane');
```

Use it only where you need it, on the brittle, shifting UI, not for every line of the test.

Because the tests remain Playwright-based, system variance is handled by the same patterns and tooling you already trust. Run them in ephemeral environments with controlled world-states, and all three kinds of variance are accounted for: a suite you can trust.

And yes, we are cooking up something on the ephemeral-environment side too. Stay tuned...

References

The themes above—non-deterministic tests, UI-level flakiness, and mitigations—are well documented in both industry practice and research. These sources are a good starting point if you want to go deeper.

- Martin Fowler, Eradicating Non-Determinism in Tests. A widely cited overview of why tests become non-deterministic (including async and shared-state issues) and how to structure tests to get repeatable results.
  https://martinfowler.com/articles/nonDeterminism.html

- Google Testing Blog (George Pirocanac), Test Flakiness - One of the main challenges of automated testing (Dec 2020). Describes categories of flakiness and why inconsistent automated tests slow development; follow-up posts in the same series expand on causes and responses.
  https://testing.googleblog.com/2020/12/test-flakiness-one-of-main-challenges.html

- Google Testing Blog (John Micco), Flaky Tests at Google and How We Mitigate Them (May 2016). Early, concrete account of flaky tests at scale, including mitigation strategies and discussion of where UI tests skew flaky.
  https://testing.googleblog.com/2016/05/flaky-tests-at-google-and-how-we.html

- Wing Lam, Stefan Winter, Anjiang Wei, Tao Xie, Darko Marinov, Jonathan Bell, A Large-Scale Longitudinal Study of Flaky Tests, Proc. ACM Program. Lang. 4, OOPSLA, Article 202 (2020). Peer-reviewed study of when tests become flaky and how changes in code, tests, and dependencies contribute.
  https://doi.org/10.1145/3428270
  Conference entry: https://2020.splashcon.org/details/splash-2020-oopsla/78/A-Large-Scale-Longitudinal-Study-of-Flaky-Tests

- Microsoft Playwright, Auto-waiting (Actionability). Official documentation for pre-action checks (visible, stable, receiving events, enabled, etc.) that reduce timing-driven failures.
  https://playwright.dev/docs/actionability

- Microsoft Playwright, Assertions. Describes auto-retrying assertions (expect) that wait until conditions hold, complementary to actionability for stable checks.
  https://playwright.dev/docs/test-assertions

- Heroku / 12factor, Dev/prod parity (The Twelve-Factor App). Classic framing for keeping development, staging, and production sufficiently aligned so “works in my environment” mismatches show up earlier; relevant when reasoning about world-state and shared environments.
  https://12factor.net/dev-prod-parity

- Google Research (Diego Cavalcanti), De-Flake Your Tests: Automatically Locating Root Causes of Flaky Tests in Code at Google, ICSME 2020. Empirical work on locating flaky-test root causes in code at Google scale; reports high accuracy for the proposed technique in their evaluation.
  https://research.google/pubs/de-flake-your-tests-automatically-locating-root-causes-of-flaky-tests-in-code-at-google/