Introduction

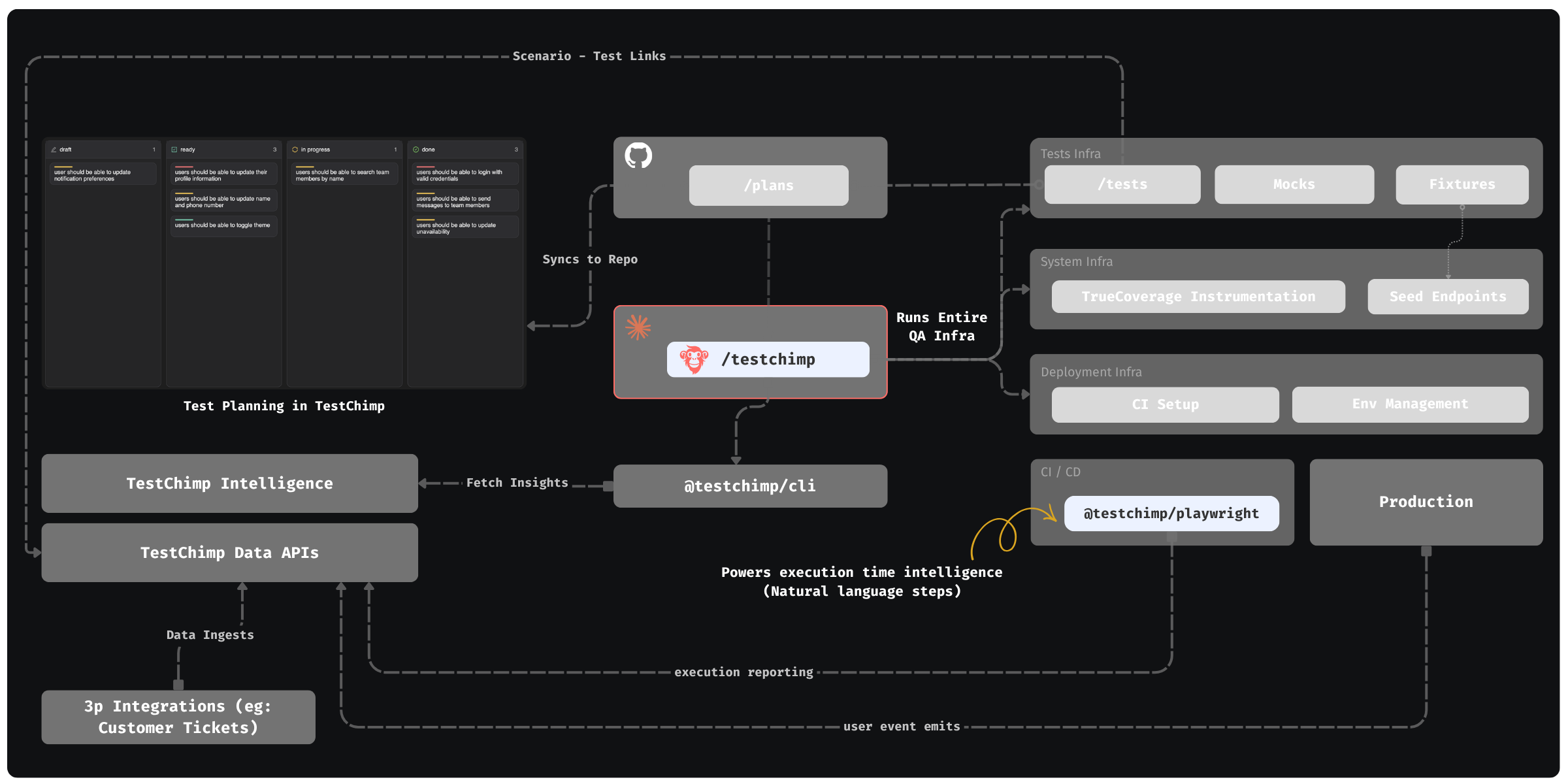

TestChimp is the QA workflow layer for AI agents: every phase of the QA workflow is managed and orchestrated in-code, so agents become first-class, active participants — not bolt-on helpers.

Result: fully autonomous QA that runs your QA infrastructure, authors and maintains tests, continuously monitors for coverage gaps, and closes product risks over time.

How it works

Test planning

Teams author user stories and scenarios in the TestChimp web app (or import them from Jira / Linear) using familiar, human-friendly workflows: assign owners, set priorities, add labels, manage due dates, and track work on Kanban boards.

Unlike traditional issue trackers, stories and scenarios are stored as Markdown, organized in folders, and synced to Git. They live next to your codebase, so Claude can pick up and work on them, author tests, and ensure requirement linking in tests.

What TestChimp + Claude enables

Controlled feedback loop

Claude—upskilled with TestChimp—works with the platform to orchestrate a tight control loop that:

- Tracks requirement coverage through in-code, comment-based links authored in tests.

- Tracks TrueCoverage: coverage aligned with real user behaviour, using user-event instrumentation (emitted from your app and correlated in TestChimp across production and test runs).

Together, that lets you continuously surface real gaps in testing and prioritize by business impact. For example, TestChimp can highlight drop-offs, dwell, and funnel position of certain events from real sessions; the agent can author tests for those paths, catch regressions, and run ExploreChimp—targeted UX analytics along the same SmartTest journeys (checkpointed with the markScreenState fixture)—either as part of /testchimp test (optional Phase 5), /testchimp evolve, or via /testchimp explore when exploration is the primary goal.
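To make the requirement-coverage side of this loop concrete, here is a minimal sketch of how comment-based links could be turned into a list of uncovered scenarios. The `// @Scenario:` comment format, the function names, and the sample titles are all assumptions made for illustration; the actual link syntax and the way TestChimp computes coverage are not specified here.

```typescript
// Hypothetical link format: a comment line such as `// @Scenario: <scenario title>`.
// The real TestChimp comment syntax may differ; this only illustrates the idea.
const SCENARIO_LINK = /^\s*\/\/\s*@Scenario:\s*(.+?)\s*$/;

/** Collect the scenario titles referenced by comment links in one test file. */
function linkedScenarios(testSource: string): string[] {
  const titles: string[] = [];
  for (const line of testSource.split("\n")) {
    const match = SCENARIO_LINK.exec(line);
    if (match) titles.push(match[1]);
  }
  return titles;
}

/** Return the planned scenarios that no test file links to. */
function uncoveredScenarios(planned: string[], testSources: string[]): string[] {
  const covered = new Set<string>();
  for (const source of testSources) {
    for (const title of linkedScenarios(source)) covered.add(title);
  }
  return planned.filter((title) => !covered.has(title));
}

// Illustrative data only.
const planned = ["Guest checkout", "Apply coupon", "Reset password"];
const testSources = [
  `// @Scenario: Guest checkout
test("guest can pay", async () => {});`,
  `// @Scenario: Reset password
test("reset email is sent", async () => {});`,
];

const gaps = uncoveredScenarios(planned, testSources);
console.log(gaps); // the scenarios that still need a linked test
```

Keeping the link next to the test, rather than in a separate mapping file, means the coverage picture can be recomputed from the repository alone.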

Enterprise-grade QA infrastructure

TestChimp installs and maintains an enterprise-grade QA harness autonomously, including:

- CI wired for automated SmartTest execution.

- Data seeding and teardown endpoint management (and read/probe helpers where your stack needs them).

- Playwright fixtures so environments can be brought to known, repeatable states—and kept aligned between authoring and execution.

- Mocking strategy: HTTP/API interception (for example `page.route`) and optional LLM doubles when your app calls models, so tests stay stable without hiding real integration you care about.

- Environment provisioning for PR-scoped, full-stack isolation, so agents can test in deterministic environments.
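As an illustration of the HTTP interception item above, the sketch below builds a reusable handler that answers a request with a fixed JSON body. It is not TestChimp's generated harness: `jsonMock`, `RouteLike`, the payload, and the stand-in route are hypothetical names introduced here so the example runs without a browser. `RouteLike` mirrors only the `fulfill` options (`status`, `contentType`, `body`) of Playwright's `Route`.

```typescript
// Minimal structural type covering the one Playwright Route method used here.
// In a real test the `route` argument is supplied by Playwright itself.
type FulfillOptions = { status?: number; contentType?: string; body?: string };

interface RouteLike {
  fulfill(options: FulfillOptions): Promise<void>;
}

/** Build a route handler that always answers with the given JSON payload. */
function jsonMock(payload: unknown, status = 200) {
  return (route: RouteLike): Promise<void> =>
    route.fulfill({
      status,
      contentType: "application/json",
      body: JSON.stringify(payload),
    });
}

// Hypothetical payload, for illustration only.
const ordersHandler = jsonMock({ orders: [{ id: 1, total: 42 }] });

// Stand-in route that records what the handler sent.
const sent: FulfillOptions[] = [];
const fakeRoute: RouteLike = {
  fulfill: (options) => {
    sent.push(options);
    return Promise.resolve();
  },
};

void ordersHandler(fakeRoute);
console.log(sent[0]);
```

In a Playwright test, a handler like this could be registered with `await page.route("**/api/orders", ordersHandler)`, where the URL pattern is again only an example. Mocking one endpoint at a time keeps the rest of the stack real, which matches the goal of not hiding integrations you care about.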

Outcomes:

- End-to-end tests before PRs merge, reducing regressions and the cost of fixes.

- More reliable tests through deterministic fixture-based setup shared between authoring and execution.

- QA infra built for agent-driven testing, not one-off scripts.

Test execution-time intelligence

Plain Playwright tests are tied to UI selectors; even when an LLM writes the file, the result is still a “dumb” script that executes selector-based commands, which makes tests flaky.

TestChimp enables natural-language steps inside Playwright tests that run agentically at runtime (with `ai.act`, `ai.verify`, `ai.extract`). This allows Claude to "ship intelligence with the test", so that the step is executed by an agent at runtime. This improves both the robustness and the maintainability of the script, since the intent of each step is encoded in the test.

ExploreChimp: test-guided analytical agents

Run analytical agents that move through the app using your SmartTests as guides. The scripted journeys pull coverage into real flows while agents inspect DOM, console, network, screenshot, and browser-metric sources to surface non-functional issues (performance, broken assets, harsh UX edges, usability, accessibility, and so on). That complements ordinary automation: tests cover functional issues, while the analytical agents flag the non-functional issues that affect your app's UX.

Executive QA control plane

The TestChimp web app is where humans oversee the agent-driven loop:

- Requirement coverage: Gaps aligned with business requirements (stories and scenarios).

- TrueCoverage: Coverage insights grounded in real user behaviour, for driving a QA strategy optimized for business impact.

- Execution history: Identify which tests fail and which scenarios or journeys are at risk.

- Atlas: View non-functional issues captured by analytical agents, aligned to your app's structure (screens, states).
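The idea behind behaviour-aligned coverage can be sketched as weighting each user event by how often it occurs in production, then checking whether it also fired during test runs. The event shape, the sample counts, and the use of raw production frequency as a proxy for business impact are assumptions for illustration only. They are not TestChimp's actual data model or scoring.

```typescript
// Hypothetical event shape; TestChimp's real data model is not described here.
interface EventUsage {
  name: string; // user-event name emitted by the app
  productionCount: number; // occurrences seen in real sessions
}

/** Share of production event volume whose events also fired in test runs. */
function weightedCoverage(usage: EventUsage[], testedEvents: Set<string>): number {
  const total = usage.reduce((sum, e) => sum + e.productionCount, 0);
  if (total === 0) return 0;
  const covered = usage
    .filter((e) => testedEvents.has(e.name))
    .reduce((sum, e) => sum + e.productionCount, 0);
  return covered / total;
}

/** Untested events, most-used first, as a simple stand-in for impact ranking. */
function topGaps(usage: EventUsage[], testedEvents: Set<string>): string[] {
  return usage
    .filter((e) => !testedEvents.has(e.name))
    .sort((a, b) => b.productionCount - a.productionCount)
    .map((e) => e.name);
}

// Illustrative numbers only.
const usage: EventUsage[] = [
  { name: "profile_edited", productionCount: 100 },
  { name: "checkout_started", productionCount: 600 },
  { name: "coupon_applied", productionCount: 300 },
];
const testedEvents = new Set<string>(["checkout_started"]);

console.log(weightedCoverage(usage, testedEvents)); // 0.6
console.log(topGaps(usage, testedEvents)); // ["coupon_applied", "profile_edited"]
```

A ranking like this gives an agent an ordered list of paths to test next, rather than treating every uncovered event as equally urgent.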

Agent workflow

Install the TestChimp skill on Claude Code, Cursor, or any host that loads skills. Day to day you use these commands:

| Command | When |
|---|---|
| `/testchimp init` | Once per repo: full QA harness bootstrap. |
| `/testchimp test` | After implementation on a PR, before merge: PR-scoped QA (Analyze → Plan → Execute → Validate → optional ExploreChimp → Cleanup). |
| `/testchimp explore` | ExploreChimp as the primary task: targeted UX analytics on chosen UI SmartTests (after markers and env are ready). |
| `/testchimp evolve` | On a schedule or after events (for example deploys): portfolio-level improvement across coverage, behaviour signals, and suite hygiene (optional TrueCoverage-driven ExploreChimp). |

Details:

- Init: Environment strategy for pre-merge E2E, seeding/teardown, Playwright fixtures, test scaffold, TrueCoverage wiring, CI.

- Test: Full phased workflow for the current PR; plans, tests, platform insights, provisioning, SmartTests, scenario links, optional Phase 5 ExploreChimp.

- Explore: Run ExploreChimp on a scoped set of UI specs; same analytics model as optional Phase 5 of `/testchimp test`.

- Playwright runtime plugin (`@testchimp/playwright`): Report runs to TestChimp, tag user events for TrueCoverage, run ExploreChimp when enabled, and run natural-language steps in CI and locally.

- Evolve: Use coverage, execution, and behaviour signals to prioritize and execute improvements by impact, across the whole portfolio.

What the web app is for

TestChimp in the browser is primarily the human-facing surface to:

- Understand coverage gaps (requirements and real-user behaviour).

- Track testing progress over time.

- Run exploratory tests to find bugs.

- Review UX issues (including from Atlas) and coordinate fixes with agents.

- Author and manage test plans (stories and scenarios) and integrations.

You can author tests in the product (for example with the Chrome extension to capture steps); Claude / Cursor with the skill is the recommended path for day-to-day test authoring at scale.

Key benefits on top of using Claude alone

- Tight feedback loop: TestChimp orchestrates a feedback loop where production behaviour signals and requirement gaps feed in for autonomous QA improvement.

- ExploreChimp and exploratory agents: ExploreChimp runs targeted UX analytics along SmartTest pathways (DOM, screenshot, console, network, metrics) using `markScreenState` checkpoints; exploratory agents in the product can similarly exercise flows and report issues across performance, visual, responsiveness, localization, accessibility, usability, and more. Humans can verify findings and hand fixes back to agents.

- Proper QA infra setup: Shift-left E2E testing and world-state coordination between authoring and execution time produce reliable tests and catch regressions earlier in the SDLC, making fixes cheaper.

- Execution-time intelligence: `ai.act`, `ai.verify`, and related commands bring AI into the test runtime where selectors alone are not enough.

- Intelligence dashboards: One place to see and steer the whole QA process: bug findings aligned with app structure, and coverage gaps aligned with requirements and real user behaviour.

Git and the platform in practice

- Connect Git in the project and map two folders: one for plans (stories / scenarios in Markdown) and one for tests (SmartTests: Playwright on steroids).

- Plans are edited in the product and synced to the repo; agents read `plans/` to scope testing for PRs.

- `@testchimp/cli` lets the agent interact with the TestChimp platform to get analytical insights, provision environments, and use other orchestration hooks to drive the QA workflow autonomously.

See also

- Test planning intro: Markdown plans and Git sync.

- Smart Tests intro: Playwright, `ai.*` steps, linking scenarios.

- Screen-State Annotations: `markScreenState` fixture and Atlas workflow for ExploreChimp.

- TrueCoverage intro: Behaviour-aligned coverage.

- QA Intelligence: Dashboards and analytics.